Smart glasses with artificial intelligence (AI) capabilities are wearable computers equipped with cameras and microphones that can record audio and video and capture biometric data such as facial features and voiceprints. In March 2026, Philadelphia became the latest U.S. court system to ban them, and violators face arrest. If courtrooms won't allow them, you need to understand why, because the legal reasoning applies directly to consumer apps.

Why Are Courts Banning Smart Glasses?

Starting March 30, 2026, Philadelphia's First Judicial District banned any eyeglasses with video and/or audio recording capabilities from all court buildings. Violators could face arrest. The stated reason? "Adding Smart/META eye glasses to the prohibition will further enhance privacy measures and help lessen witness or juror intimidation by preventing any video recording of them," according to court officials.

Philadelphia joins Hawaii, Wisconsin, and North Carolina in explicitly banning the devices. But the problem started weeks earlier, when Mark Zuckerberg's legal team showed up wearing Ray-Ban Meta glasses during a trial about Instagram's addictive design. Judge Carolyn Kuhl warned the Meta team: "If you have done that, you must delete that, or you will be held in contempt of the court. This is very serious."

The technical challenge is visibility. "Since these glasses are difficult to detect in courtrooms, it was determined they should be banned from the building," court representatives explained. There are no current plans to screen people as they enter court to determine whether the glasses they're wearing are equipped with a camera—enforcement relies on self-reporting and observation.

What Privacy Violations Triggered the Bans?

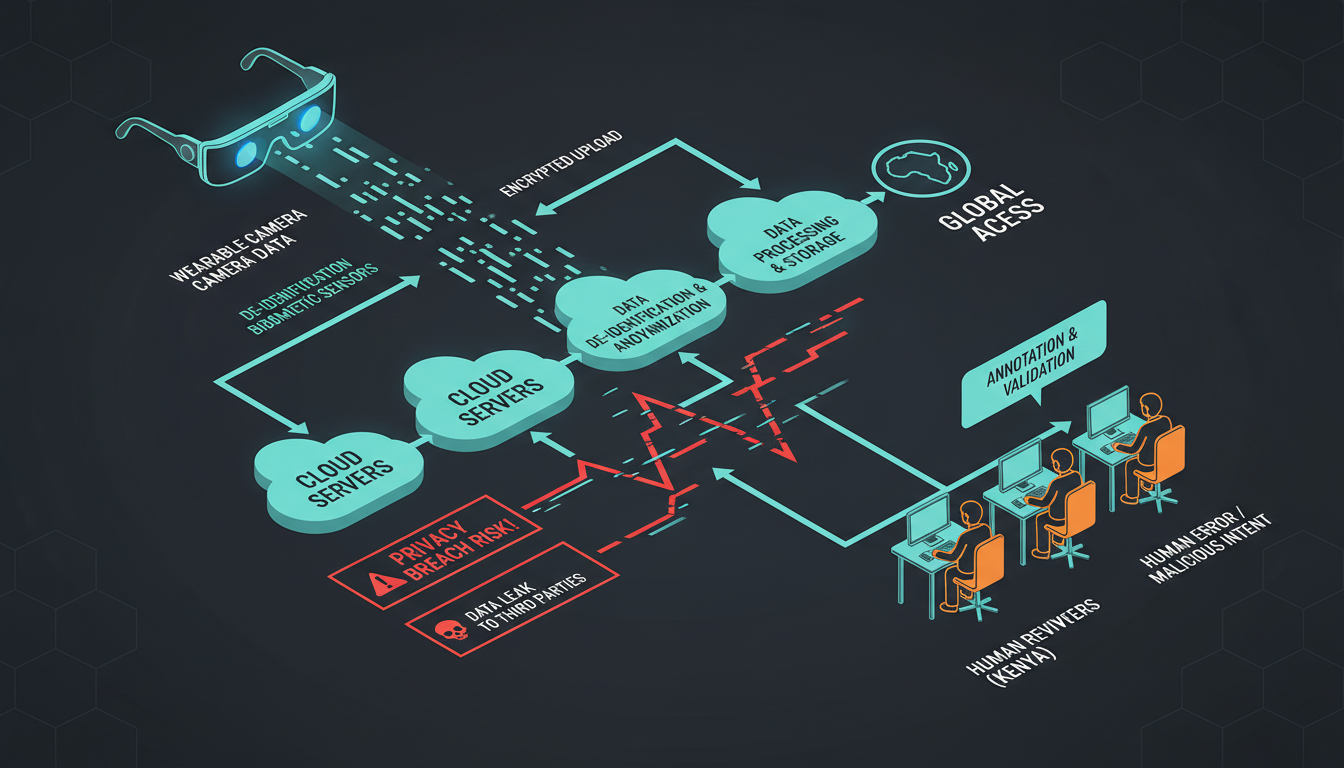

Data flow diagram showing smart glasses privacy risks

Two scandal threads converged. First, a February 2026 Swedish investigation revealed something disturbing: workers at Sama, a Kenya-based subcontractor, were reviewing video clips captured through Meta's AI glasses as part of an AI training pipeline, and the reviewed footage included bathroom visits, nudity, sexual activity, and other private moments inside users' homes.

In one incident, a man placed his Meta glasses on a bedside table and left the room. His partner entered and changed clothes in front of the camera, not knowing the glasses were still recording. That footage was then sent to workers in Kenya for labeling, all without the woman's knowledge or consent.

Second, the covert-filming epidemic. A BBC investigation in January found that dozens of male influencers across TikTok and Instagram were using Meta's Ray-Ban smart glasses to secretly film women for content. One woman, Dilara, was 21 and on her lunch break when a man struck up a conversation and filmed her without her knowledge. The footage went up on TikTok, hit 1.3 million views, and included her phone number.

Third-party bystanders cannot consent. Privacy harms extend to bystanders, intimate partners, household members, and others who never consented to participate in the product's data practices. Courts have decided that the only enforceable solution is a blanket ban.

Does Your App Collect Similar Data?

If you're shipping a consumer app with camera access, microphone permissions, or location tracking, the courtroom logic applies to you. Here's why courts banned smart glasses:

Invisible collection. The glasses look like ordinary Ray-Ban eyewear, so bystanders rarely realize they might be recorded. Unlike a phone held up to record or a visible body camera, smart glasses capture video with no obvious signal, which complicates the traditional privacy analysis. Does your app collect data silently in the background?

Biometric data without consent. AI glasses can capture and process sensitive information such as facial features, voiceprints, eye tracking, and other identifiers. In many cases, this data qualifies as biometric personal data under laws like the General Data Protection Regulation (GDPR), Illinois' Biometric Information Privacy Act (BIPA), or the California Consumer Privacy Act (CCPA). Under BIPA, penalties are $1,000 per negligent violation and $5,000 per intentional or reckless violation.

Third-party processing you didn't disclose. A class-action lawsuit filed March 5, 2026 accuses Meta of exactly this. Meta used advertising claims like "designed for privacy, controlled by you," "built for your privacy," and "you're in control of your data and content." The complaint argues these statements were materially false given that footage was routed to overseas contractors without meaningful user awareness.

Reasonable expectation of privacy. Courts analyze whether someone had a reasonable expectation of privacy—and they've decided that jurors, witnesses, and defendants all have that expectation. This is particularly significant in places where people have a reasonable expectation of privacy: restrooms, changing rooms, private residences, and medical facilities. Does your app operate in sensitive contexts?

What Should You Do Before Launch?

The courtroom bans are a regulatory preview. Here's what to check before you ship:

1. Audit third-party SDKs. If AI-generated code auto-installed an analytics SDK that streams video frames to a cloud annotator, you own the liability. AI agents make data collection decisions without your legal team—until the fine arrives.

2. Map biometric data flows. Under laws like the California Consumer Privacy Act (CCPA), Illinois' Biometric Information Privacy Act (BIPA), and the EU's General Data Protection Regulation (GDPR), biometric data receives heightened protection. If you process the faces, fingerprints, or voiceprints of people in Illinois, you need explicit consent before collection: not at signup, but before the camera activates.

3. Rewrite your privacy policy for humans. Disclosures fail when the marketing language, product design, and overall experience all point in the opposite direction. The "we may share data with partners" boilerplate won't survive discovery. Specify which partners, in which countries, and for what purpose.

4. Implement technical controls. Use device management solutions to control which features can be activated in which locations. Consider geofencing to automatically disable recording in sensitive areas like bathrooms, break rooms, confidential meeting spaces, and healthcare facilities. If your app has location and camera access, you can build this.

5. Check state wiretapping laws. About a dozen states require all parties to consent to the recording of private conversations. No published court cases have addressed the use of smart glasses in these states, but legal experts warn that it poses a major risk. If you ship audio transcription features, you're in all-party consent territory (often called two-party consent) in California, Connecticut, Florida, Illinois, Maryland, Massachusetts, Montana, Nevada, New Hampshire, Oregon, Pennsylvania, and Washington.
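
Steps 2, 4, and 5 can be combined into a single check that runs before the camera or microphone turns on. The following is a minimal sketch, not a definitive implementation: `SensitiveZone`, `capture_blockers`, and the zone coordinates are hypothetical names and values invented for illustration, the state list is copied from this article rather than from the statutes, and a circular geofence is assumed as the simplest possible zone shape.

```python
from dataclasses import dataclass
from math import asin, cos, radians, sin, sqrt

# All-party consent states exactly as listed in this article (assumption:
# confirm against current statutes before relying on this set).
ALL_PARTY_CONSENT_STATES = frozenset(
    {"CA", "CT", "FL", "IL", "MD", "MA", "MT", "NV", "NH", "OR", "PA", "WA"}
)


@dataclass(frozen=True)
class SensitiveZone:
    """Hypothetical geofence: a named circle where recording stays off."""
    name: str
    lat: float
    lon: float
    radius_m: float


def distance_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in meters (haversine formula)."""
    earth_radius_m = 6_371_000.0
    dlat = radians(lat2 - lat1)
    dlon = radians(lon2 - lon1)
    a = sin(dlat / 2) ** 2 + cos(radians(lat1)) * cos(radians(lat2)) * sin(dlon / 2) ** 2
    return 2 * earth_radius_m * asin(min(1.0, sqrt(a)))


def capture_blockers(
    state: str,
    lat: float,
    lon: float,
    biometric_consent: bool,
    all_parties_consented: bool,
    zones: list,
    records_audio: bool = True,
) -> list:
    """Return every reason capture must stay off; an empty list means go."""
    reasons = []
    if not biometric_consent:
        reasons.append("no explicit biometric consent")
    if records_audio and state in ALL_PARTY_CONSENT_STATES and not all_parties_consented:
        reasons.append(f"{state} requires all-party consent for audio")
    for zone in zones:
        if distance_m(lat, lon, zone.lat, zone.lon) <= zone.radius_m:
            reasons.append(f"inside sensitive zone: {zone.name}")
    return reasons


if __name__ == "__main__":
    # Illustrative zone only; the coordinates are not a real facility.
    zones = [SensitiveZone("clinic", 39.9526, -75.1652, 150.0)]
    print(capture_blockers("PA", 39.9526, -75.1652, False, False, zones))
```

Returning the full list of reasons, rather than a single boolean, gives you something to log and to show the user when capture is refused.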

What Happens When Courts Make Tech Decisions

Philadelphia's ban applies to everyone: attorneys, jurors, witnesses, and the public. Anyone caught attempting to bring smart eyewear into court buildings can be barred from entry or removed from the building, and may be arrested and charged with criminal contempt. Even prescription glasses with recording capability are forbidden.

The enforcement gap is real. Courts have no current plans to screen for camera-equipped glasses at the door, and even if they did, security staff would first need to be taught what to look for. But the signal is clear: when institutions decide that invisible data collection threatens due process, they ban the technology entirely.

Regulators are watching the same news. The UK's Information Commissioner's Office already contacted Meta to request information about GDPR compliance. If judges won't allow your data practices in a courtroom—a public building with lower privacy expectations than a hospital, school, or home—consumer protection agencies will follow.

The class-action lawsuit filed against Meta argues the company released a "surveillance nightmare" disguised as fashion. Your mobile app might look different, but if you're collecting audio, video, location, or biometric data and routing it to third-party processors without explicit, granular consent, you're implementing the same architecture that just got banned from courtrooms in four states.

Frequently Asked Questions

Why did courts ban smart glasses but not smartphones?

Smartphones require visible interaction: you take the phone out, orient the camera, and it's obvious you're recording. Smart glasses make recording so seamless and so invisible that they alter the background social assumptions that ordinarily govern cameras. Courts decided the invisibility itself violates juror and witness protections.

Are smart glasses illegal to own?

No. Ownership is legal, but use is increasingly restricted. Courts in Hawaii, Wisconsin, and North Carolina have similar bans, and Colorado is considering one. Royal Caribbean prohibits the use of smart glasses in areas "where there is a reasonable expectation of guest or crew privacy." Private property owners and institutions can set their own rules, which often exceed legal minimums.

What data do Meta Ray-Ban glasses actually collect?

Media you capture when you press the camera button is kept on the glasses until you import it onto your phone, though by default it is imported automatically into the Meta AI mobile app. Anytime you use AI features, like when you say, "Hey Meta, start recording," the footage is fed to Meta. Some videos are fed to Meta for AI training, and in at least some cases those videos go through human review.

Can I use smart glasses for my business?

Maybe—but organizations should assess all relevant laws and regulations in their jurisdiction and industry before deployment. Connecticut, Delaware, and New York require employers to notify employees of certain electronic monitoring. California's CCPA gives employees specific rights over their personal information, including the right to know what's collected and the right to deletion. Union environments face additional scrutiny.

What happens if my app violates BIPA?

BIPA provides for statutory damages of $1,000 to $5,000 per violation, along with attorneys' fees. Following the Illinois Supreme Court's 2023 Cothron decision, each scan or transmission could constitute a separate violation, though a 2024 amendment limited this to one violation per person per collection method. Clearview AI's class-action settlement, approved in March 2025 and valued at approximately $51.75 million (structured as an equity stake in the company rather than a cash payment), demonstrates the scale of exposure under BIPA.
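
To see why the per-person limit matters, here is a rough worst-case calculation under both counting rules. This is a sketch under stated assumptions: it uses the $1,000 and $5,000 figures above, assumes every affected person recovers the full statutory amount, and ignores attorneys' fees. The function names and the user counts are hypothetical.

```python
NEGLIGENT_USD = 1_000   # per negligent violation
RECKLESS_USD = 5_000    # per intentional or reckless violation


def exposure_per_person(people: int, collection_methods: int = 1, reckless: bool = False) -> int:
    """Worst case when each person counts once per collection method."""
    rate = RECKLESS_USD if reckless else NEGLIGENT_USD
    return people * collection_methods * rate


def exposure_per_scan(people: int, scans_per_person: int, reckless: bool = False) -> int:
    """Worst case when every scan or transmission counts separately."""
    rate = RECKLESS_USD if reckless else NEGLIGENT_USD
    return people * scans_per_person * rate


if __name__ == "__main__":
    # Hypothetical app: 10,000 Illinois users, face and voice capture,
    # 200 scans per user over the app's lifetime.
    print(exposure_per_person(10_000, collection_methods=2))  # 20000000
    print(exposure_per_scan(10_000, scans_per_person=200))    # 2000000000
```

Even under the narrower per-person rule, a mid-sized user base with two collection methods reaches eight figures before fees.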

How do I know if my mobile app collects biometric data?

If your app uses face unlock, voiceprint authentication, gait analysis, or processes images/video that could identify individuals, you're collecting biometric data. Facial features, voiceprints, eye tracking, and other identifiers fall under biometric personal data in laws like GDPR, Illinois' BIPA, or the CCPA. Run a cookie scanner and check your third-party SDK documentation—many vendors process this data server-side without flagging it in your privacy manifest.
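
One way to make that SDK review repeatable is to keep a small inventory of what each SDK sends, to whom, and whether your policy discloses it. The sketch below is illustrative only: the SDK names, processors, and data-type labels are invented, and the inventory has to be filled in by hand from vendor documentation, since nothing here inspects a real app binary.

```python
from dataclasses import dataclass

# Labels for data this article treats as biometric (assumed taxonomy).
BIOMETRIC_TYPES = frozenset(
    {"face_geometry", "voiceprint", "eye_tracking", "gait", "fingerprint"}
)


@dataclass(frozen=True)
class SdkDataFlow:
    """One row of a hand-maintained SDK inventory (hypothetical schema)."""
    sdk: str
    data_types: frozenset
    processor: str
    country: str
    purpose: str
    disclosed: bool


def undisclosed_biometric_flows(flows: list) -> list:
    """Flows that carry biometric data but are missing from the privacy policy."""
    return [f for f in flows if (f.data_types & BIOMETRIC_TYPES) and not f.disclosed]


def disclosure_line(flow: SdkDataFlow) -> str:
    """Plain-language policy sentence: which partner, which country, what purpose."""
    types = ", ".join(sorted(flow.data_types))
    return f"{flow.processor} ({flow.country}) receives {types} for {flow.purpose}."


if __name__ == "__main__":
    # Invented example entries, not real vendors.
    inventory = [
        SdkDataFlow("visionkit-example", frozenset({"face_geometry", "crash_logs"}),
                    "ExampleAnnotator Ltd", "KE", "AI training", False),
        SdkDataFlow("metrics-example", frozenset({"crash_logs"}),
                    "ExampleMetrics Inc", "US", "analytics", True),
    ]
    for flow in undisclosed_biometric_flows(inventory):
        print("UNDISCLOSED:", disclosure_line(flow))
```

The same `disclosure_line` output can seed the plain-language partner list in your privacy policy, so the inventory and the policy stay in sync.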