Autonomous code generation powered by AI agents lets artificial intelligence systems write, modify, and deploy code independently, making decisions about third-party integrations, API calls, and data flows without waiting for explicit human approval at each step. When an agent installs a package, wires up an SDK, or adds a tracking script to close a GitHub issue, it is also making compliance decisions your legal team never reviewed.

What Privacy Risks Do AI Coding Agents Actually Create?

AI agents select known-vulnerable package versions more often than humans (2.46% vs. 1.64%), according to a January 2026 study analyzing 117,062 dependency changes across seven ecosystems. But version risk is only part of the picture.

The compliance problem runs deeper. Autonomous installation routines expose projects to malicious package injection, and agents can open files, call APIs, install dependencies, and modify repositories without human checkpoints. When agents retrieve sensitive data, invoke tools, and take action using real identities and permissions, failure becomes an automated sequence of access, execution, and downstream impact.

Under the General Data Protection Regulation (GDPR), the app publisher is the data controller responsible for all personal data processing within their application—including processing by third-party SDK code—and the publisher cannot delegate that responsibility. The Microsoft Security Blog notes that a system can be "working as designed" while still taking steps a human would be unlikely to approve, because boundaries were unclear, permissions were too broad, or tool use was not tightly governed.

Every auto-installed analytics SDK is a new data processor relationship. Every feature flag service is a new data flow. Your agent closed 47 tickets last sprint—how many created GDPR Article 28 obligations you don't have contracts for?

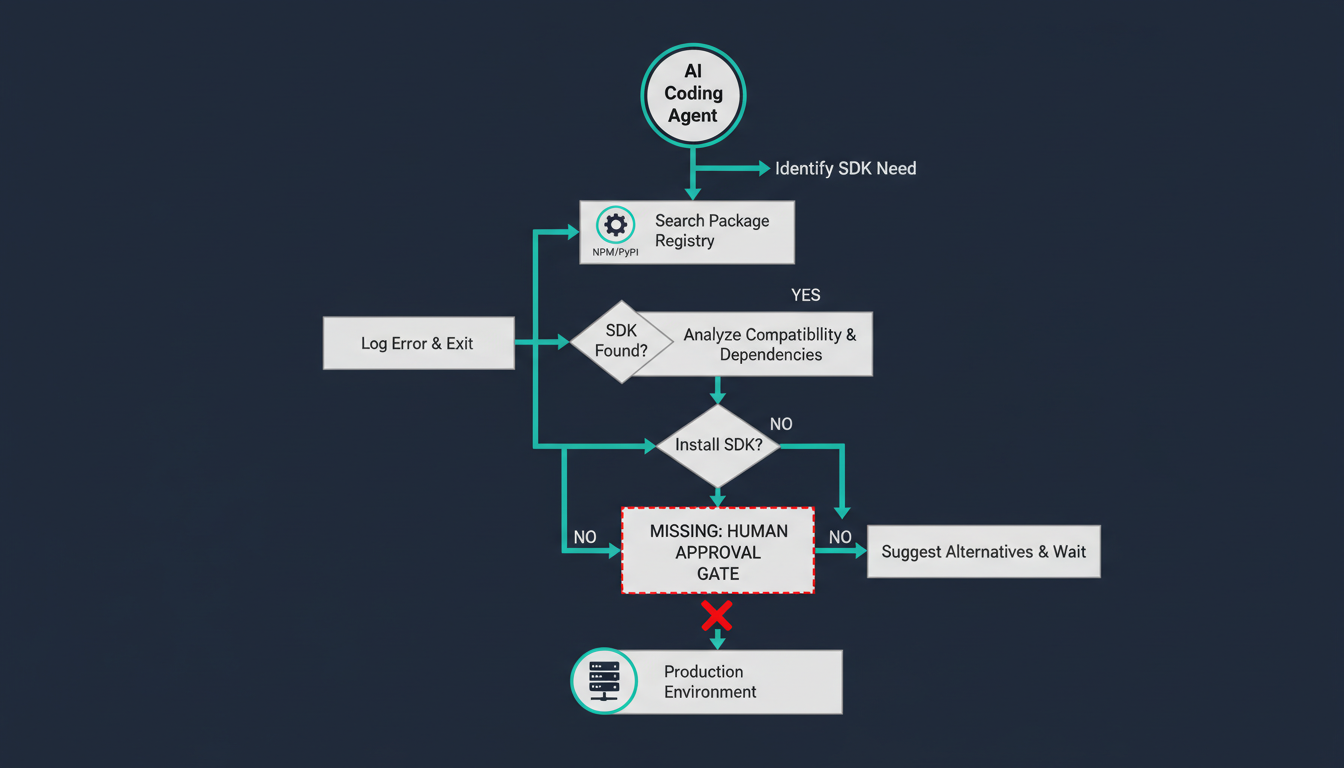

How Do Autonomous Agents Bypass Normal SDK Review?

AI agent SDK installation workflow without compliance review

Agents widen the blast radius because they often hold more credentials than a typical developer environment and install packages without human review. Coding agents automatically installed the poisoned nx/debug release on npm, as documented by Oligo Security in their September 2025 analysis.

When developers grant AI agents permission to modify projects and install dependencies autonomously, a typo-squatted package can instantly compromise the local machine and, by extension, CI pipelines, repositories, and entire organizations. A February 2026 npm worm campaign specifically targeted AI coding agents and IDEs, attempting to add malicious MCP configurations that steal LLM API keys and SSH keys.

Traditional approval gates assume a human reads the diff. Agentic workflows assume the agent's judgment is the approval. AI coding assistants often produce code that mishandles personal information, such as insecure credential storage and insufficient anonymization, and can inadvertently reproduce data-handling practices inconsistent with privacy laws like GDPR, per a January 2026 systematic literature review.

When Cursor or Claude Code autonomously adds @vercel/flags or mixpanel-browser to package.json, it can create a California Consumer Privacy Act (CCPA) service provider relationship, complete with the requirement for a written contract specifying data processing terms. GDPR imposes a parallel requirement: data controllers and processors must sign written contracts outlining rights and responsibilities, and data processors may act only on the controller's documented instructions.

Does the CCPA Apply to AI Agent Decisions?

Automated Decision-Making Technology (ADMT) under the CCPA is defined as any technology that processes personal information and uses computation to replace or substantially replace human decision-making. The rules apply to significant decisions affecting employment, housing, credit, education, healthcare, or access to essential services. California's ADMT requirements apply from January 1, 2027: businesses that use ADMT to make significant decisions must give consumers notice, allow opt-out, and respond to access requests.

If an AI agent is autonomously deciding which feature flag SDK to install, which A/B testing vendor to integrate, or how to instrument user tracking—and those decisions affect what personal data gets collected and where it flows—you're in grayer territory than most compliance teams have mapped.

Both controller and processor can be held legally responsible, but the controller's oversight will almost always come under scrutiny, and the controller's responsibility begins before the processor ever starts processing data. The IAPP notes that distinguishing processors from third parties remains a challenge even for established workflows.

What Should Engineering Teams Do Before Deploying Agents?

Treat agent-installed dependencies as supply chain events. Package security researcher Andrew Nesbitt recommends requiring packages to come from a vetted list, flagging new dependencies for human review, rejecting packages below a popularity or age threshold, and provisioning agents with scoped, short-lived credentials that cover only what the current task requires.
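
As a rough illustration, the vetted-list, popularity, and age checks could be combined into a single policy function. This is a minimal sketch, not a definitive implementation: the `PackageInfo` type, the `review_dependency` function, the package names on the list, and the threshold values are all hypothetical, and a real version would pull download counts and publish dates from your registry. Credential scoping is out of scope here.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical policy values; tune these to your own risk tolerance.
VETTED_PACKAGES = {"react", "lodash", "express"}
MIN_WEEKLY_DOWNLOADS = 10_000
MIN_AGE_DAYS = 90


@dataclass
class PackageInfo:
    name: str
    weekly_downloads: int
    first_published: date


def review_dependency(pkg: PackageInfo, today: date) -> tuple[str, list[str]]:
    """Return a decision ("allow", "review", or "reject") plus the reasons."""
    if pkg.name in VETTED_PACKAGES:
        return "allow", ["on vetted list"]

    reasons = []
    if pkg.weekly_downloads < MIN_WEEKLY_DOWNLOADS:
        reasons.append(f"only {pkg.weekly_downloads} weekly downloads")
    age_days = (today - pkg.first_published).days
    if age_days < MIN_AGE_DAYS:
        reasons.append(f"published {age_days} days ago")
    if reasons:
        return "reject", reasons

    # Established but unvetted packages still go to a human reviewer.
    return "review", ["new dependency not on vetted list"]
```

Returning the reasons alongside the decision gives the reviewer something concrete to act on when the agent's install is blocked or flagged.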

Audit what your agents already installed. Run a diff between your current package.json / requirements.txt / go.mod and the state before you enabled autonomous coding agents. Every new third-party integration is a potential data processor. Your Record of Processing Activities under GDPR Article 30 must include each SDK-driven processing activity as a distinct entry, with the vendor named as processor, legal basis documented, data categories listed, and retention period specified.
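
For an npm project, the manifest diff can be sketched in a few lines. The example below is a minimal sketch under stated assumptions: `added_dependencies` is a hypothetical helper, it only reads the `dependencies` and `devDependencies` sections of package.json, and it ignores version bumps and lockfile-only changes. Equivalent logic for requirements.txt or go.mod would need a different parser.

```python
import json

DEPENDENCY_SECTIONS = ("dependencies", "devDependencies")


def added_dependencies(before_manifest: str, after_manifest: str) -> dict[str, str]:
    """Return {name: version_spec} for packages present only in the newer manifest."""

    def collect(manifest_text: str) -> dict[str, str]:
        manifest = json.loads(manifest_text)
        found = {}
        for section in DEPENDENCY_SECTIONS:
            found.update(manifest.get(section, {}))
        return found

    before = collect(before_manifest)
    after = collect(after_manifest)
    return {name: spec for name, spec in sorted(after.items()) if name not in before}
```

The "before" text can come from version control, for example `git show <commit>:package.json` for the last commit made before agents were enabled. Each name the function returns is a candidate for a processor entry in your Record of Processing Activities.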

Gate integration changes behind compliance review. If an agent wants to add Sentry, Segment, Amplitude, or any SDK that touches user data, require a human sign-off that includes: Does a Data Processing Agreement exist? Is this vendor in our privacy policy? Does the user have a consent mechanism?
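
One way to make that sign-off checkable is to keep the three answers in a vendor register and have CI look them up. The sketch below assumes a hypothetical `VENDOR_REGISTER` maintained by your privacy or legal team; the `VendorRecord` type and `compliance_blockers` function are illustrative names, and the True/False values are invented placeholders, not statements about any real vendor.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VendorRecord:
    dpa_signed: bool
    in_privacy_policy: bool
    consent_mechanism: bool


# Hypothetical register; the values are placeholders, not facts about these vendors.
VENDOR_REGISTER = {
    "@sentry/browser": VendorRecord(True, True, True),
    "mixpanel-browser": VendorRecord(False, True, False),
}


def compliance_blockers(package: str) -> list[str]:
    """List the unanswered sign-off questions for a package; empty means clear."""
    record = VENDOR_REGISTER.get(package)
    if record is None:
        return ["vendor not in register: needs full compliance review"]
    blockers = []
    if not record.dpa_signed:
        blockers.append("no Data Processing Agreement on file")
    if not record.in_privacy_policy:
        blockers.append("vendor not listed in privacy policy")
    if not record.consent_mechanism:
        blockers.append("no user consent mechanism")
    return blockers
```

A pull request check could fail whenever `compliance_blockers` returns a non-empty list for a newly added package, which routes the change to a human instead of letting the agent merge it.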

Log agent actions with compliance metadata. Log every package install, version resolution, and registry query an agent makes, and diff against expected behavior—if an agent asked to fix a CSS bug runs npm install crypto-utils, that should page someone. The same logic applies to SDK installations.
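
A structured log record for each install might look like the following. This is a minimal sketch: the `log_agent_install` function, the field names, and the idea of passing in an `expected_packages` set per task are assumptions made for illustration, and `print` stands in for whatever log sink or paging system you actually use.

```python
import json
from datetime import datetime, timezone


def log_agent_install(task: str, package: str, expected_packages: set[str]) -> dict:
    """Build a structured log record and mark installs the task did not call for."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "event": "package_install",
        "task": task,
        "package": package,
        "expected": package in expected_packages,
        # Compliance metadata: left unset until someone confirms the answer.
        "touches_personal_data": None,
        "dpa_on_file": None,
    }
    # Any install outside the expected set should raise an alert.
    record["alert"] = not record["expected"]
    print(json.dumps(record))  # stand-in for a real log sink
    return record
```

Calling `log_agent_install("fix CSS bug in header", "crypto-utils", set())` produces a record with `alert` set to true, which is the signal that should page someone.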

Scan your site to see what third-party data flows already exist—before an agent adds ten more you didn't approve.

Why This Gets Worse in 2027

The regulatory environment of late 2026 will not tolerate the "move fast and break things" approach that characterized early AI deployment, and executive liability for autonomous systems is now a settled principle, according to a December 2025 analysis of agentic AI threat models. Organizations deploying autonomous coding agents need to address compliance proactively as enforcement tightens through 2026 and 2027.

GDPR enforcement has already shown that "we didn't know our SDK was doing that" is not a defense. CNIL has explicitly stated that it holds app owners accountable for their partners' data practices; an SDK vendor's compliance failure that you enabled through poor governance is your compliance failure.

When that SDK was installed by an autonomous agent you gave write access to your repo, the governance failure is even clearer. The agent didn't understand GDPR. You gave it commit access anyway.

Frequently Asked Questions

Q: Are AI coding agents considered automated decision-making technology under privacy laws?

A: It depends on what decisions they're making. If an agent is autonomously choosing which data collection SDKs to integrate or how to instrument user tracking, it may meet the CCPA's ADMT definition. The key question is whether the agent substantially replaces human judgment on decisions affecting personal data processing.

Q: Who is liable when an AI agent installs a non-compliant third-party SDK?

A: Under GDPR, the data controller (typically the company deploying the app) remains responsible for all processing activities, including those performed by third-party code. You cannot delegate legal accountability to the AI agent or the SDK vendor, though processors can face direct enforcement as well.

Q: Do we need Data Processing Agreements for every SDK an AI agent installs?

A: If the SDK processes personal data on your behalf (analytics, feature flags, error tracking, etc.), GDPR Article 28 requires a written DPA specifying processing purposes, data categories, security measures, and sub-processor relationships—regardless of whether a human or agent installed it.

Q: How can we tell what data collection decisions our AI agents have already made?

A: Audit your dependency changes since enabling autonomous agents. Compare package manifests, review agent commit logs, and use a cookie scanner or network analysis to identify third-party domains your app now contacts. Every new integration is a potential compliance gap.

Q: What's the biggest compliance risk with autonomous code generation?

A: Agents create data processor relationships faster than legal teams can review them. A single sprint can add five new third-party SDKs—each requiring contracts, privacy policy updates, consent mechanisms, and records of processing activities—without anyone outside engineering knowing it happened.

Q: Should we disable AI coding agents until we have compliance guardrails?

A: Not necessarily, but you should restrict their permissions. Allowlist approved packages, require human review for any new third-party dependency, and ensure agents cannot modify data collection code without compliance sign-off. Productivity gains aren't worth regulatory fines or breach notification obligations.